Train a K-Means Clustering Model with Scikit-Learn¶

This tutorial demonstrates how to train a K-Means Clustering 1 model using Scikit-Learn. 2

1. Acquire Training Data¶

Unsupervised learning 3 is a technique that takes in unlabeled training data, and generates its own labels for a dataset. Oftentimes, it is used as an exploratory tool to find collections of similar items. For example, to find molecules or crystals with similar properties.

The data used in this example was acquired from Kaggle 4.

It consists of a group of 21,263 superconductors, along with the following properties:

- Atomic Mass (AMU)

- First Ionization Energy (kJ/mol)

- Atomic Radius (pm)

- Density (kg/m^3)

- Electron Affinity (kJ/mol)

- Fusion Heat (kJ/mol)

- Thermal Conductivity (kJ/mol)

- Valence (number of bonds)

For each property, various properties including mean, the weighted mean, and standard deviation are calculated. The dataset was originally posted to Kaggle to pose the regression problem of predicting a superconductor's critical temperature 5, but for our purposes, we will train a clustering model to separate the superconductors into several groups.

Due to the filesize limits imposed by our upload system (20 MB), we will truncate at 15,000 examples, for a 16 MB training set. For convenience, we have processed this file to meet our upload constraint; download it it here.

2. Upload the Training Data¶

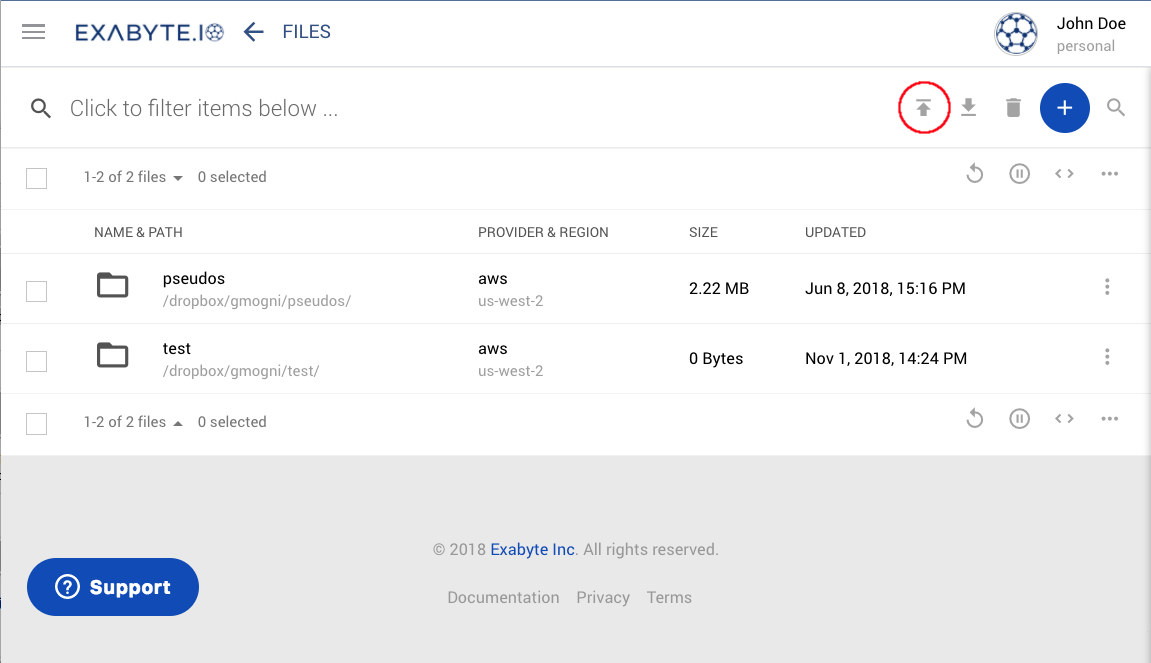

In order to upload training data, we first click the Dropbox button in the left sidebar.

This will bring us to the Dropbox Page. We can then click the "Upload" button, circled

below:

Then, when the browser's upload window appears, we navigate to where we downloaded the file in section 1, and select it for upload. If the upload was successful, the file will then be visible in the dropbox.

3. Copy the "Python ML Train Clustering" Workflow from the Workflow Bank¶

Next, we select theBank Worfklows button in the left sidebar, which brings us to

the Bank Workflows Page. We then search for the "Python ML Train Clustering" workflow owned

by the "Curators" account, and copy it to our account.

A diagram and detailed description of this workflow can be found here.

4. Create the ML Job¶

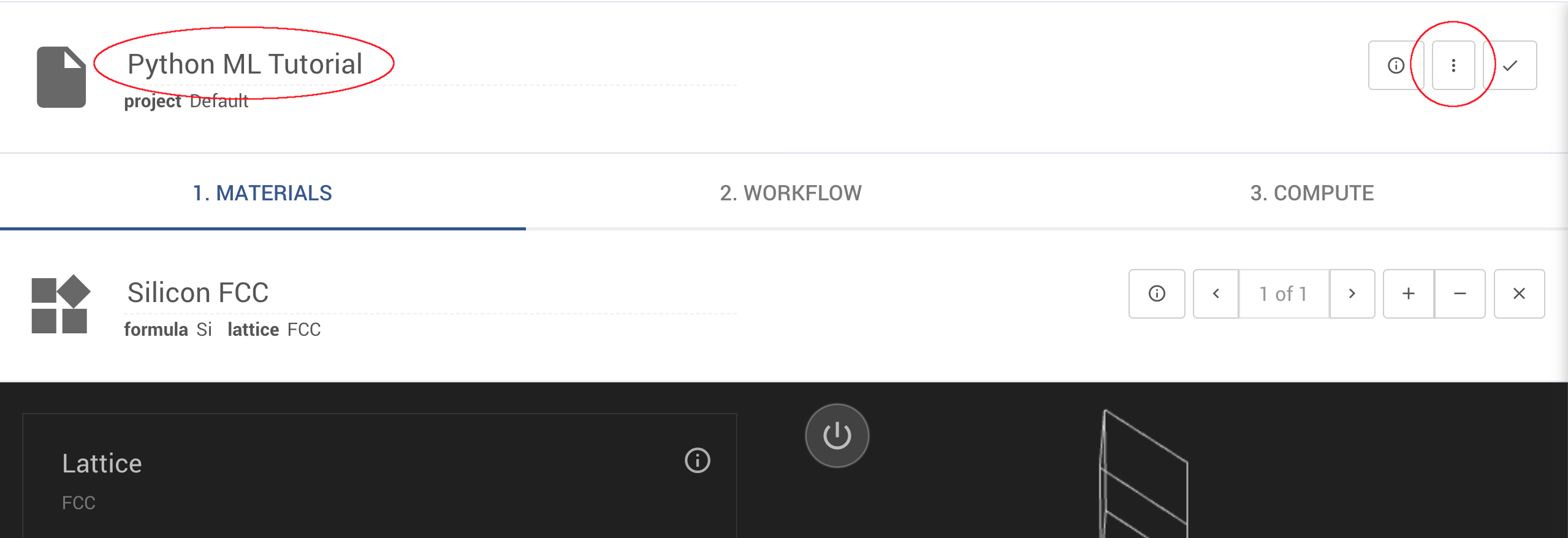

Next, we can create a new job by selecting the Create Job button in the left sidebar. This

will bring us to a new job on the Job Designer page.

First, we will give the job a friendly name, such as "Python ML Tutorial" (see below). Then, we will click the Actions Button (the three vertical dots in the upper-right of the job designer), and choose "Select Workflow."

This will bring up the Select Workflow dialogue. We then search for "Python ML Train Clustering" and select it.

5. Select the Dataset¶

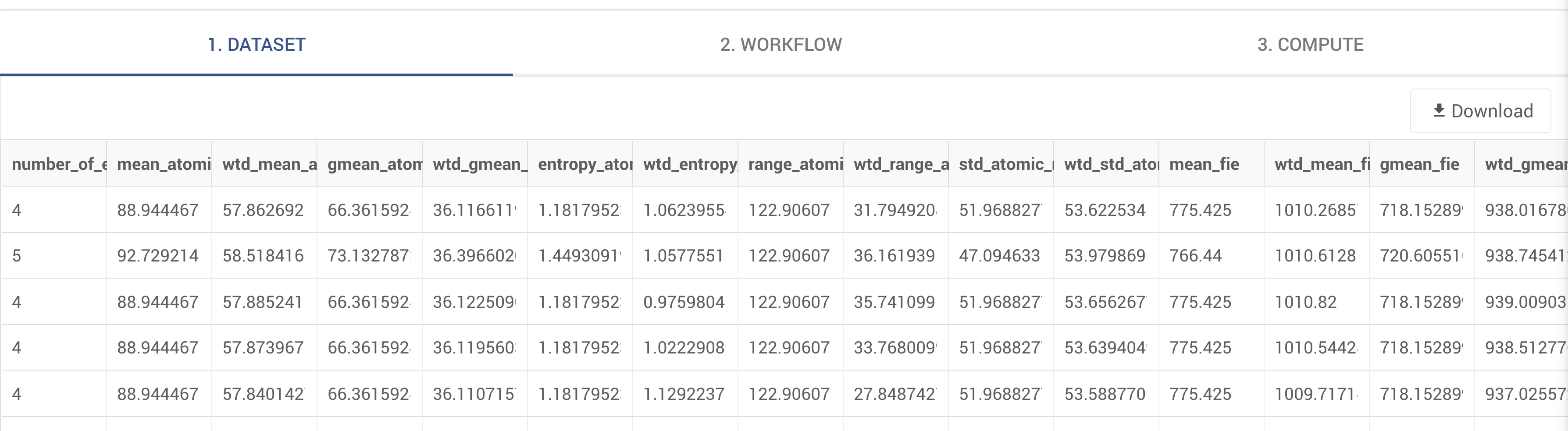

The job designer changes now that our ML Training workflow is selected. The "Materials" tab has now been replaced with a "Dataset" tab. Just as the "Materials" tab shows a preview of the materials the job will use, the "Dataset" tab shows a preview of the dataset once it is selected.

To select a dataset, click the Actions Button (the three vertical dots in the upper-right of the job designer) and choose "Select Dataset." This will bring up a files explorer containing all files presently on the dropbox. Choose the training set we uploaded earlier, "clustering_data.csv."

A preview of the data then appears on the dataset tab, indicating that the data has successfully been loaded.

6. Configure the Workflow¶

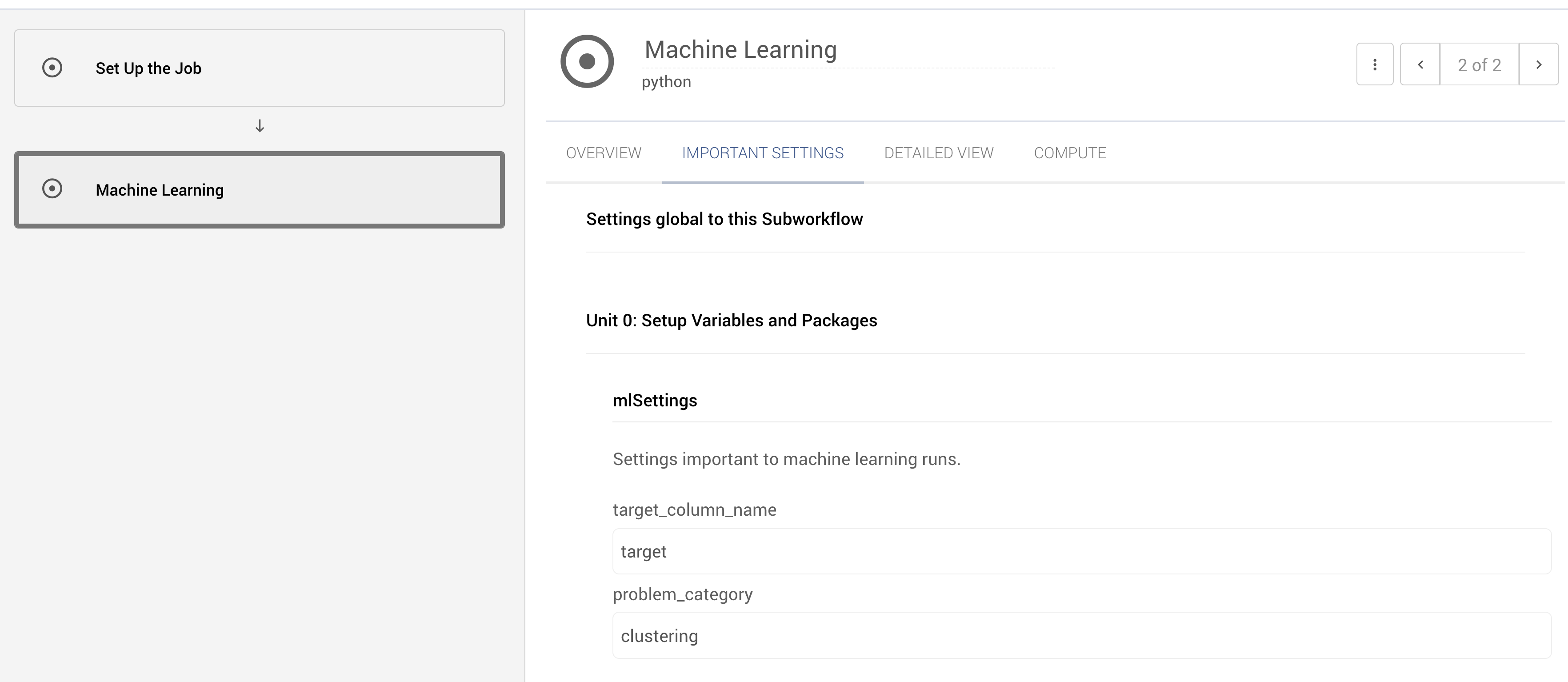

We have now chosen our ML workflow and training set. Select the Workflows Tab, and we can see our training workflow.

We can see two subworkflows available: Set Up the Job

and Machine Learning.

The Set Up the Job subworkflow contains instructions to copy in the training data.

A Word of Caution

The Set Up the Job subworkflow is automatically configured during the training process. Modifying it can disrupt

creation of the Predict workflow, leading to inaccurate results, or a failure to generate a predict workflow.

Select the Machine Learning subworkflow by clicking on it. The following workflow units should now be visible:

Setup Packages and Variables- Configures the job and downloads all required packages withpipData Input- Reads the training data from diskTrain Test Split- Splits the data into a training set and a testing setData Standardize- Scales the data such that it has mean 0 and standard deviation 1Model Train and Predict- Handles model training, and prediction2D PCA Clusters Plot- Draws a plot of the clusters in the training and testing set, projected onto the first two principle components 6 of the dataset.

We will begin by configuring our Machine Learning subworkflow. To begin, select the "Important Settings" portion of the

workflow editor. Then, set problem_category to "clustering" to state that we are solving a clustering problem.

By default, the workflow will split the dataset into 4 clusters. This can be configured within the

Model Train and Predict unit. We will click the Model Train and Predict unit to bring up the workflow unit editor.

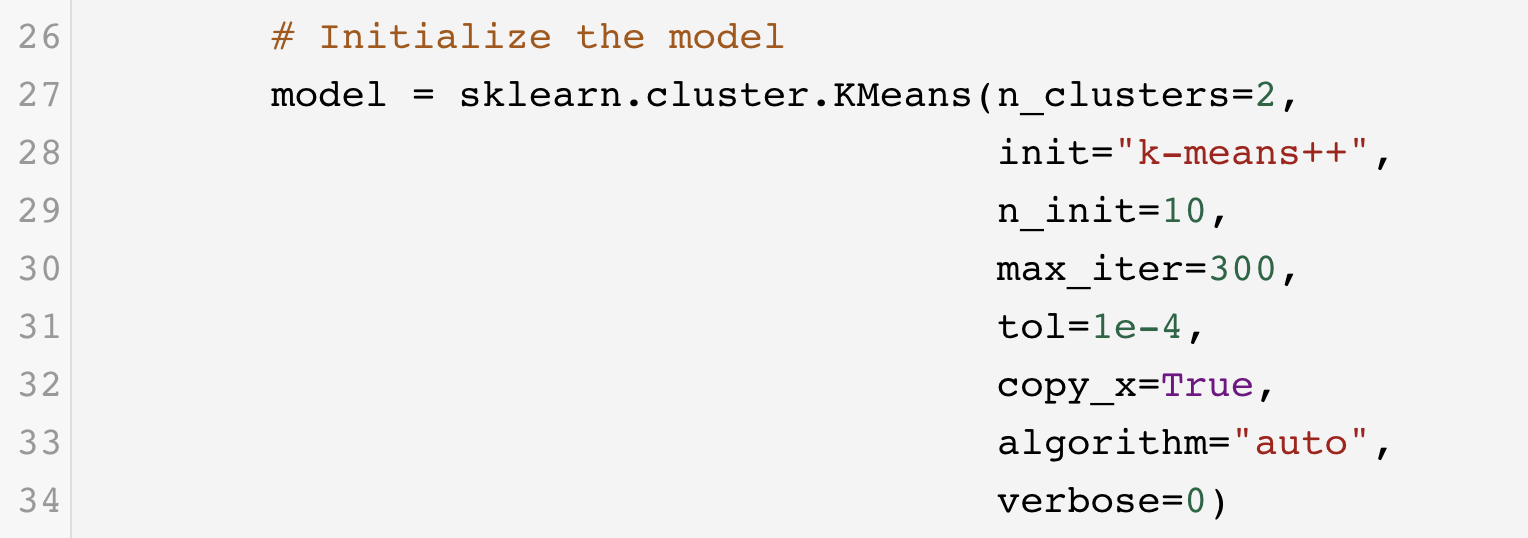

Then, scroll down to line 27, and change n_clusters from 4 to 2. Then, close the unit editor.

The workflow has now been configured, and we are ready to train.

7. Submit the Job¶

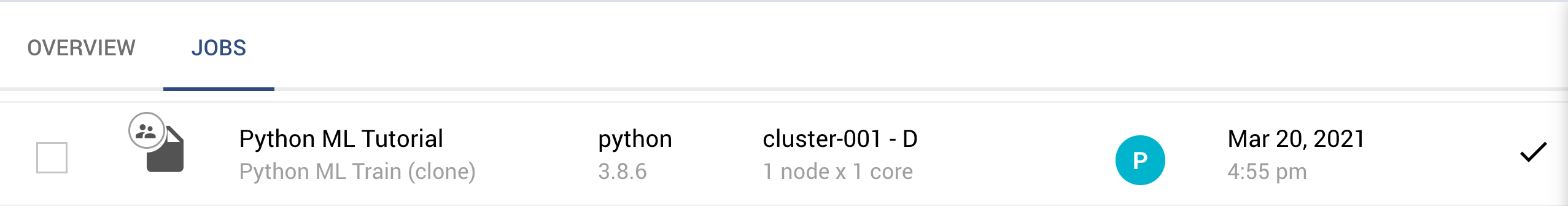

Click the check-mark in the upper right of the job designer, in the Header Menu to save the job. We now return to the job explorer page with the job in a pre-submission status

We can now run the job and wait for it to complete.

8. Analyze the Training Results¶

After a few minutes, the job will complete. We can then visit the job's results tab,

where we will see that two properties have been calculated. The first, Machine Learning - Model Train and Predict is

the predict workflow that was generated by the machine learning job. The predict workflow can be used to leverage the

trained model for additional predictions on new data. In the case of clustering, this means assigning new values to the

clusters identified by the model.

The second result visible is Machine Learning - 2D PCA Clusters Plot, which draws the clusters projected along their

first two principle components. Each color represents a different group. Circles represent the training set, and squares

represent the testing set.

Animation¶

This tutorial is demonstrated in the following animation: